I spent one afternoon turning Claude Code into something that talks back. No API key. No rate limit. No surveillance.

I grew up watching Star Trek. The characters on the bridge of the Enterprise never typed at the computer. They asked it. The computer answered. It read them log entries while they did other things. It rendered diagrams when asked. There was no scrolling, no copy-paste, no waiting for a tab to load. What I built is a tiny first step toward that.

I love using Claude. It is genuinely the best coding assistant I have used. But I do not believe any single AI company is a long-term plan for the way I want to work. Companies pivot. APIs get rate limited. And I am not a fan of monopolies.

If your daily workflow is hard-wired to one vendor's cloud, you will eventually end up renting the air you breathe.

That is why this project leans hard on locally hosted weights. If Anthropic vanishes tomorrow, or I decide to stop using them, I may lose the chat interface I love. But the voice, the diagram viewer, the orchestration, the hotkeys, all of it keeps working. I like building this way: engineering for a future you do not control.

How it works

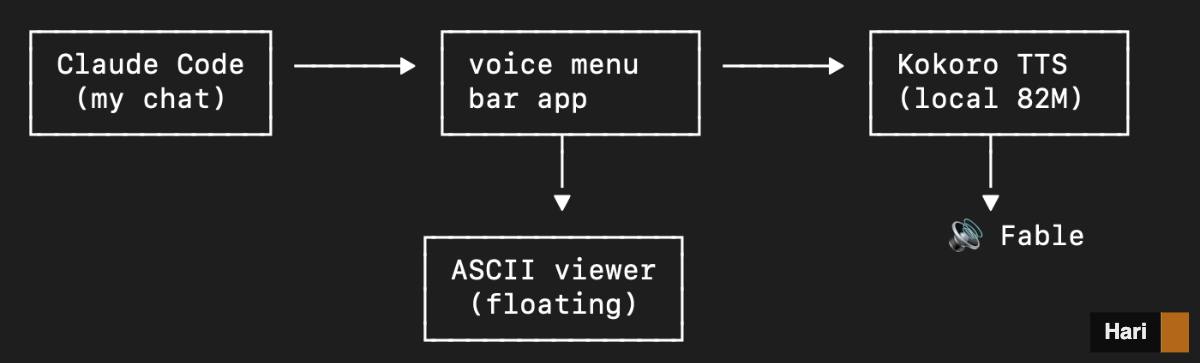

It is a small Python app that lives in my menu bar, listens to whatever Claude writes, and reads it aloud in a calm voice. It also notices when Claude draws an ASCII diagram, pops it into a floating window, and narrates the surrounding prose. Everything runs on the laptop. No cloud. No subscription. No data leaving the machine.
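To make the diagram handling concrete, here is a minimal sketch of how the split between "show this" and "read this" could work. It is not the code from my app. The names `looks_like_diagram` and `split_prose_and_diagrams`, the character set, and the two-line minimum are all assumptions picked for illustration, and the heuristic will also catch things like Markdown tables.

```python
import re

# Assumed heuristic: characters and patterns that tend to show up in diagrams.
BOX_CHARS = set("│─┌┐└┘├┤┬┴┼═║╔╗╚╝")
ASCII_ART = re.compile(r"(\+-{2,})|(-{2,}>)|(<-{2,})|(^\s*\|.*\|\s*$)")


def looks_like_diagram(line: str) -> bool:
    """Return True if a single line looks like part of a diagram."""
    if any(ch in BOX_CHARS for ch in line):
        return True
    return bool(ASCII_ART.search(line))


def split_prose_and_diagrams(text: str, min_lines: int = 2):
    """Separate diagram blocks from prose.

    Returns (prose, diagrams): prose is the text to narrate,
    diagrams is a list of multi-line strings to display.
    """
    prose, diagrams, run = [], [], []

    def flush():
        # A run shorter than min_lines is treated as ordinary prose.
        if len(run) >= min_lines:
            diagrams.append("\n".join(run))
        else:
            prose.extend(run)
        run.clear()

    for line in text.splitlines():
        if looks_like_diagram(line):
            run.append(line)
        else:
            flush()
            prose.append(line)
    flush()
    return "\n".join(prose).strip(), diagrams


if __name__ == "__main__":
    reply = (
        "Here is the flow:\n"
        "+-----+     +-----+\n"
        "|  A  | --> |  B  |\n"
        "+-----+     +-----+\n"
        "That is all."
    )
    prose, diagrams = split_prose_and_diagrams(reply)
    print(prose)        # the part that would go to the voice
    print(diagrams[0])  # the part that would go to the floating window
```

Requiring at least two consecutive diagram-like lines is a deliberate choice: a lone arrow in a sentence stays in the narration instead of opening a window.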

A small text-to-speech model called Kokoro produces the voice. A tiny one-billion-parameter Llama summarises anything genuinely long (more than 1500 words) before it hits the speaker. A daemon called skhd watches my keyboard for shortcuts, so I can pause, resume, mute, or cycle voices without touching the mouse. That is the entire stack.
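The "summarise only when it is long" step is simple enough to sketch. This is an illustration under assumptions, not my actual code: `prepare_for_speech` is a hypothetical name, the summariser is passed in as a plain function so the sketch does not pretend to know any model's API, and the stand-in below just truncates.

```python
from typing import Callable

WORD_LIMIT = 1500  # the threshold mentioned above


def prepare_for_speech(
    text: str,
    summarise: Callable[[str], str],
    limit: int = WORD_LIMIT,
) -> str:
    """Return short text unchanged; send long text through the summariser."""
    if len(text.split()) > limit:
        return summarise(text)
    return text


def truncate_summary(text: str, keep: int = 50) -> str:
    """Stand-in for the local Llama call (hypothetical): keep the first words."""
    return " ".join(text.split()[:keep])


if __name__ == "__main__":
    short = "The build passed."
    long_reply = "word " * 2000
    print(prepare_for_speech(short, truncate_summary))
    print(len(prepare_for_speech(long_reply, truncate_summary).split()))
```

Passing the summariser in as an argument keeps the threshold logic testable without loading a model, and makes it easy to swap the local model for something else later.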

I kept thinking, while building it, about how strange this would have looked five years ago. A single person, one Saturday, wiring together a neural voice and a neural language model and a multi-process orchestrator and a global hotkey daemon, and the whole thing fits inside the RAM of a MacBook Air. The fact that all of this happens with no API key, no rate limit, no surveillance is the part that still feels like science fiction to me.

The computer in your terminal can be custom built for you

There is a quiet shift happening here, where the boundary between software and tool is dissolving. You stop downloading apps and start composing them. The components are small, the scripting is short, and the result is something that nobody else has, because nobody else needs exactly your setup. I am starting to think that the AI is not the product anymore. The AI is becoming more like a material. I am open to more ideas and thoughts on this.